A machine learning bootcamp course in your inbox, free as part of your paid premium newsletter subscription. Part 3 and onwards are viewable by subscribers only. Subscribe today!

This course is worth $49, all included as part of your subscription, which costs $5 per month or $49 (22% OFF) for an entire year!

Videos are coming soon. They are also included for the duration of your subscription.

Subscribers, be sure to check the uniqtech.substack.com site regularly for content that is published but not yet sent to your inbox, so you do not have to cram it all at once.

Data Structures, Data Types, Datasets

Cracking the Coding Interview, the bible for technical interviews at Google, Facebook, and Microsoft, drills students on data structures and algorithms. Knowing data structures like trees, queues, and arrays, along with the pros and cons of each, makes engineers efficient and effective. The one data structure to know for deep learning and machine learning is the tensor. TensorFlow, Google’s deep learning library, is named after tensors. PyTorch has torch.Tensor, "a multi-dimensional matrix containing elements of a single data type."

Tensors are also used in physics, for example in relativity, but here we are using a different flavor of tensor. Trivia: CPU stands for central processing unit. A TPU by Google is a tensor processing unit. It specializes in tensor math and performance!

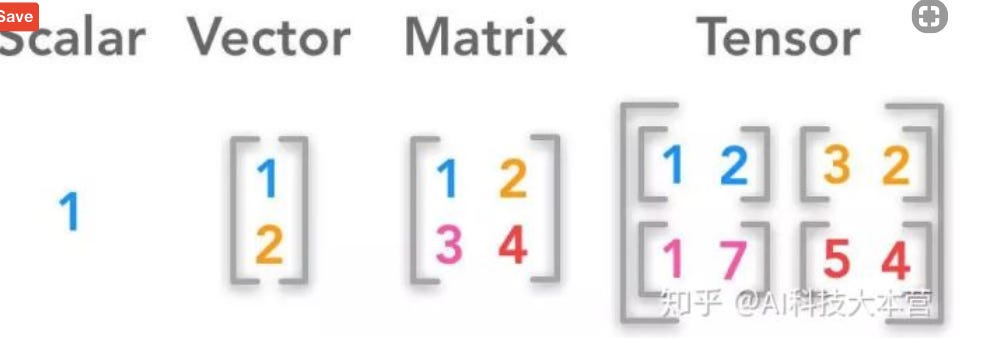

Introduction to Tensors

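A tensor, as the term is used in machine learning, is just a multi-dimensional array. Scalars, vectors, and matrices are the cases with 0, 1, and 2 dimensions, but the word tensor is usually reserved for arrays of dimension 3 or higher.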

Some examples: a scalar is 1; a vector, also known as a list or array, is [1,2,3]; a two-by-two matrix is [[1,2],[3,4]]; a tensor is [ [[1,2],[3,4]], [[5,6],[7,8]] ]. A vector contains a bunch of scalars. A matrix contains a bunch of vectors. A tensor contains a bunch of matrices.

You can check the data type of a variable in Python using type(variable_name). For a PyTorch tensor, type(my_tensor) reports torch.Tensor in current versions, and my_tensor.type() returns the specific tensor type, such as torch.FloatTensor.
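As a small illustration of checking types, the sketch below uses plain Python values and a NumPy array as stand-ins (the variable names are made up for this example). It also prints the array's dtype, which is where the element type of an array lives.

```python
import numpy as np

# Hypothetical example values: a scalar, a plain Python list, and a NumPy array.
scalar = 1
vector_as_list = [1, 2, 3]
arr = np.array([1.0, 2.0, 3.0])

print(type(scalar))          # <class 'int'>
print(type(vector_as_list))  # <class 'list'>
print(type(arr))             # <class 'numpy.ndarray'>

# type() tells you the container; dtype tells you what the elements are.
print(arr.dtype)             # float64
```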

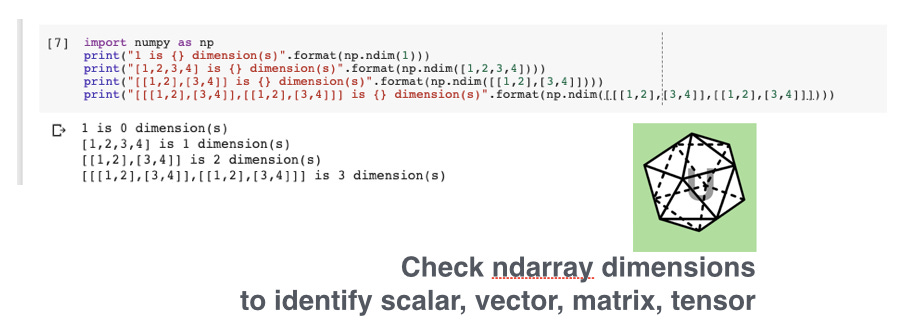

There’s no need to count by hand. Use the numpy.ndarray.ndim attribute to check the number of dimensions of your variable.
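Here is a minimal sketch of ndim in action, using the scalar, vector, matrix, and tensor examples from this section wrapped in NumPy arrays. Note that ndim is read as an attribute, without parentheses.

```python
import numpy as np

scalar = np.array(1)
vector = np.array([1, 2, 3])
matrix = np.array([[1, 2], [3, 4]])
tensor = np.array([[[1, 2], [3, 4]], [[5, 6], [7, 8]]])

# ndim counts the levels of nesting; shape gives the length along each level.
print(scalar.ndim, scalar.shape)  # 0 ()
print(vector.ndim, vector.shape)  # 1 (3,)
print(matrix.ndim, matrix.shape)  # 2 (2, 2)
print(tensor.ndim, tensor.shape)  # 3 (2, 2, 2)
```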

Row Data Column Data

Later on, the matrices and tensors are used in matrix math (mainly addition and multiplication), and the dimensions and shapes of the tensors often matter a lot! So we use my_tensor_name.shape to check its dimensions. For a two-dimensional tensor it returns a tuple (row_num, col_num). It is important that each row holds one data sample and each column holds one feature across all samples. Here we only discuss the input data, big X. X is capitalized because it is a tensor. Think y = XW + b; the upper and lower cases are intentional.
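To see why shapes matter, here is a rough sketch of y = XW + b in NumPy. The numbers, the weight vector W, and the bias b are all made up for illustration; the only point is that the column count of X has to match the length of W.

```python
import numpy as np

# 4 samples (rows) by 3 features (columns); the values are invented.
X = np.array([[1.0, 2.0, 3.0],
              [4.0, 5.0, 6.0],
              [7.0, 8.0, 9.0],
              [1.0, 0.0, 1.0]])
W = np.array([0.5, -1.0, 2.0])  # hypothetical weights, one per feature column
b = 0.1                         # hypothetical bias

row_num, col_num = X.shape
print(row_num, col_num)  # 4 3

# Matrix multiply, then add the bias to every row's result.
y = X @ W + b
print(y.shape)  # (4,)
```

If W had 4 entries instead of 3, the multiplication would raise an error, which is why checking .shape first saves a lot of debugging.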

We have not discussed small y yet. y is lowercase because it is a vector. X is known as the features tensor and y is the labels vector. For example, suppose we have many pictures of cats and dogs. The y column is just binary, 1 or 0. By convention we can say 1 refers to cats and 0 refers to dogs. Column y is the true label of each sample in the dataset. What is in the X features tensor?

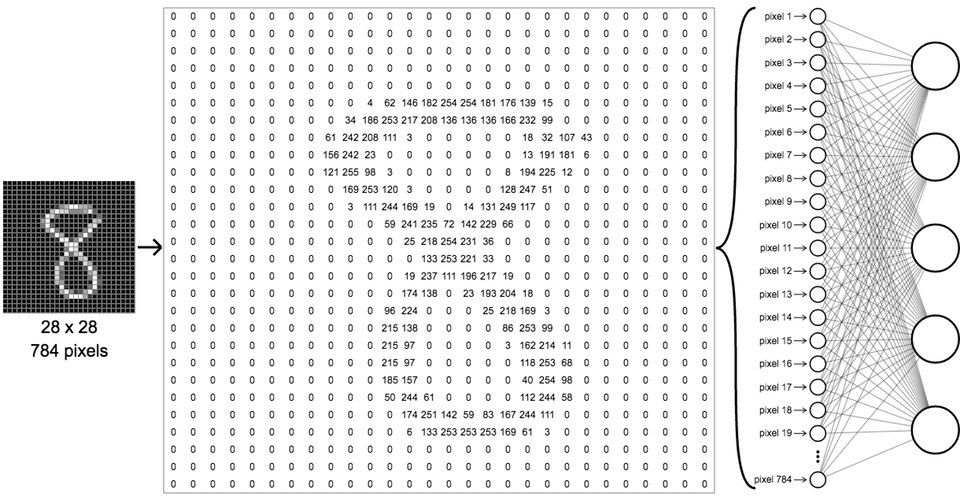

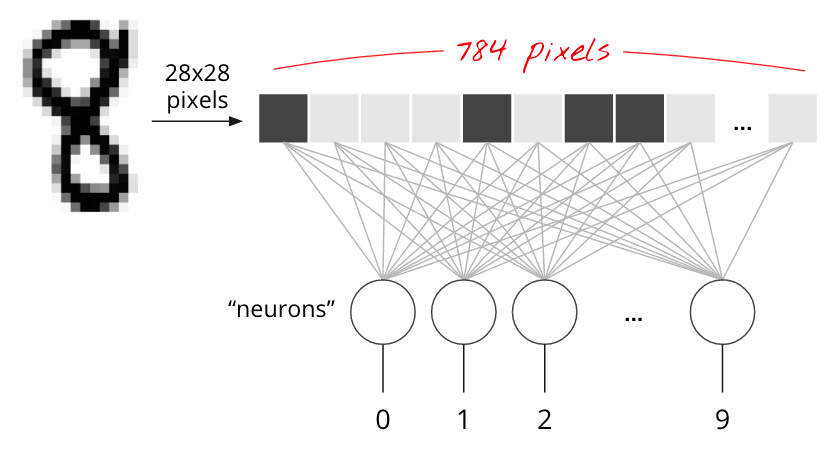

Each row is one data sample, so each row refers to one image in the dataset. The images are flattened: a 28 by 28 pixel image becomes one row with 28 * 28 = 784 columns. Each column contains one pixel value of the image.
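The flattening step can be sketched with NumPy's reshape. The pixel values below are placeholders rather than a real image, and the three-image batch is just the same placeholder repeated.

```python
import numpy as np

# Placeholder 28-by-28 "image"; real pixel values would come from a dataset.
image = np.arange(28 * 28).reshape(28, 28)

# Flatten one image into a single row of 784 columns.
row = image.reshape(1, -1)
print(row.shape)  # (1, 784)

# Flatten a small batch: one row per image, one column per pixel.
batch = np.stack([image, image, image])
X = batch.reshape(len(batch), -1)
print(batch.shape, X.shape)  # (3, 28, 28) (3, 784)
```

Passing -1 asks NumPy to work out that dimension itself, so the same line works for images of any size.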

Our X tensor can also be pure tabular data. Imagine an overly simplified loan survey: name, age, net worth. Each row of the X tensor is now a person. Each row contains three columns of data: name, age, and net worth. The algorithm decides whether to lend to this person. The y column, aka target, aka label, can still be binary: 1 means we will give this person a loan, 0 means we won’t.

The shape of the input tensor is a tuple (row_count, col_count). The row count is how many data samples we have. If the study covers 10,000 people, then we will have ten thousand rows. The column count is the number of attributes, aka features, for each data sample.
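A tiny version of the loan survey might look like the sketch below. The three people and their numbers are invented, and the name column is left out here on the assumption that only numeric columns go into X.

```python
import numpy as np

# Hypothetical survey rows: [age, net worth]. One row per person.
X = np.array([[34, 120000.0],
              [51,  45000.0],
              [27,   8000.0]])

# Hypothetical labels: 1 = give a loan, 0 = do not.
y = np.array([1, 0, 0])

row_count, col_count = X.shape
print(row_count, col_count)  # 3 2

# Every sample needs exactly one label.
print(len(y) == row_count)   # True
```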

There are a lot of related yet slightly different terms, like attributes and features. Don’t worry about that for now. Trivia: machine learning is an interdisciplinary field that draws on statistics, computer science, and even linguistics and neurobiology, and different academic teams brought their own terminology.

Some scholars claim that all machine learning techniques can be done with statistics. Mathematicians can probably claim stats is just math operations. The reality is that modern machine learning is about performance, engineering, coding, and ETL in Python, R, and C++. A statistics PhD may not perform so well in practical machine learning interviews. Later in this course you will see that deep learning, which rose to fame recently, has existed for many years but didn’t really take off until AlexNet.

See Images as Tensors

A color image is usually a tensor because it has three color channels: red, green, and blue (RGB). A grayscale image can be a matrix because it has only 1 color channel, so its dimensions, 1 by height of image in pixels by width of image in pixels, reduce to height by width.
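The difference in dimensions can be sketched with empty NumPy arrays. The 28-by-28 size is arbitrary, and the channels-first layout used here is one common convention; some libraries put the channel axis last instead.

```python
import numpy as np

height, width = 28, 28  # arbitrary size for illustration

# Grayscale: one channel, so a plain height-by-width matrix is enough.
gray = np.zeros((height, width))
print(gray.ndim, gray.shape)  # 2 (28, 28)

# The same image with its single channel written out explicitly.
gray_with_channel = gray[np.newaxis, :, :]
print(gray_with_channel.shape)  # (1, 28, 28)

# Color: three channels (R, G, B), so a 3-dimensional tensor is needed.
color = np.zeros((3, height, width))
print(color.ndim, color.shape)  # 3 (3, 28, 28)
```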

As previously mentioned, images are generally flattened from their initial 2 dimensions, height and width, into a row vector.

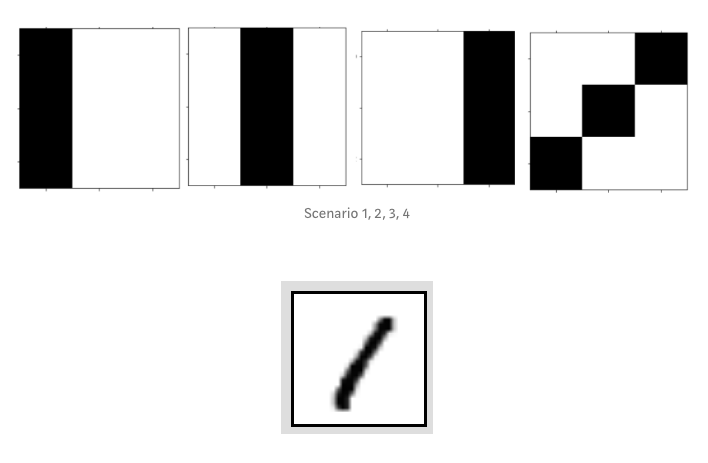

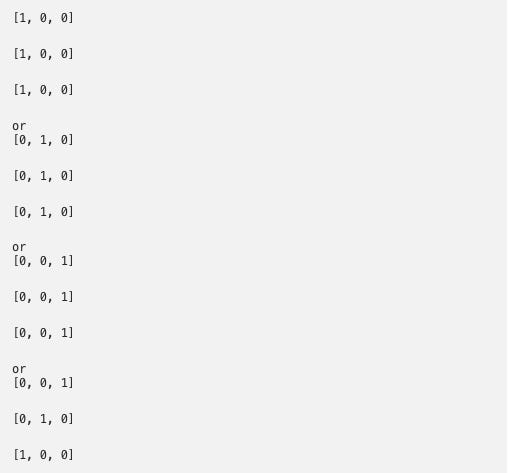

Here’s an example that we came up with. Below we have four images of the number 1.

Each mimics a 3-by-3 pixel image. In the first image, the 1 takes up only the leftmost column. The second image shifts it into the center. The last image is a slanted 1. Machines need all kinds of examples to learn. A model needs to see as many ways to write a one as possible, or else it cannot identify the number.

The images above, written in matrix form, are shown below.

In this simplified version, we denote any pixel that is filled in as 1, and 0 otherwise. Notice that the entire left column of the first matrix is 1, and all other components are zero.

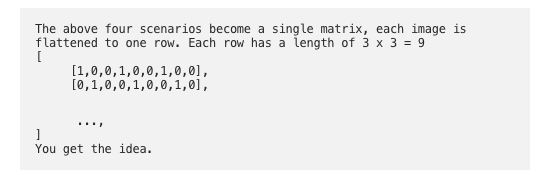

Then we flatten the four matrices for easy loading in machine learning.

What is the shape of this input tensor?

The shape is (4, 9): 4 rows because there are four images, and 3 x 3 = 9 columns because each flattened image has nine pixels.

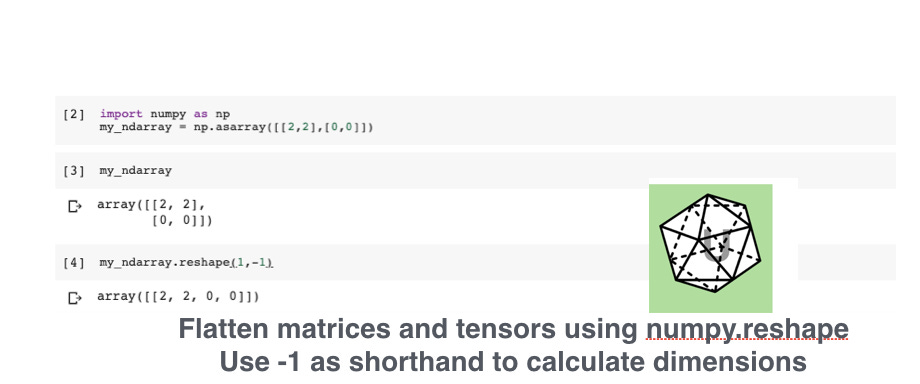

Use numpy reshape to flatten an image array:
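A minimal sketch of the reshape, using four 3-by-3 images of the number 1. The first two matrices follow the descriptions in the text (left column, center column); the third and fourth are stand-ins assumed for this example, since the exact pixels live in the pictures.

```python
import numpy as np

images = np.array([
    [[1, 0, 0], [1, 0, 0], [1, 0, 0]],  # 1 in the left column (as described)
    [[0, 1, 0], [0, 1, 0], [0, 1, 0]],  # 1 in the center column (as described)
    [[0, 0, 1], [0, 0, 1], [0, 0, 1]],  # assumed: 1 in the right column
    [[0, 0, 1], [0, 1, 0], [1, 0, 0]],  # assumed: a slanted 1
])
print(images.shape)  # (4, 3, 3)

# Keep one row per image and let NumPy infer the 9 pixel columns.
X = images.reshape(len(images), -1)
print(X.shape)  # (4, 9)
print(X[0])     # [1 0 0 1 0 0 1 0 0]
```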

Here’s a real-life example of an image of the number eight in the MNIST dataset. Trivia: it is pronounced EM-NIST.

We want to convert any input data into numeric data, because models, algorithms, and neural networks only take numeric data as inputs. A convenient data structure to hold the numeric data is a tensor.
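As one simple illustration of turning non-numeric data into numbers, the sketch below maps text labels to 1 and 0, following the convention used earlier (1 for cats, 0 for dogs). The label list and the mapping dictionary are made up for this example.

```python
import numpy as np

# Hypothetical raw labels as text.
labels = ["cat", "dog", "dog", "cat"]

# Convention from this course: 1 refers to cats, 0 refers to dogs.
label_to_number = {"cat": 1, "dog": 0}

y = np.array([label_to_number[label] for label in labels])
print(y)  # [1 0 0 1]
```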

How do PyTorch tensors and TensorFlow tensors differ from NumPy ndarrays?

“Numpy provides an n-dimensional array object, and many functions for manipulating these arrays. Numpy is a generic framework for scientific computing; it does not know anything about computation graphs, or deep learning, or gradients.” - PyTorch documentation.

Convert Tensors to Numpy ndarrays and back

Convert between pytorch tensor and numpy ndarrays

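import numpy as np

import torch

my_ndarray = np.array([[1.0, 2.0], [3.0, 4.0]])  # example data

# convert numpy ndarray to pytorch tensor (the result is a float32 tensor)

torch.Tensor(my_ndarray)

# convert numpy ndarray to pytorch tensor method 2 (keeps the ndarray's dtype)

my_torch_tensor = torch.from_numpy(my_ndarray)

# convert torch tensor to numpy representation

my_torch_tensor.numpy()

The API names are a bit awkward, but it only takes a short amount of time to get used to them.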
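One detail worth knowing about these conversions is sketched below, assuming PyTorch and NumPy are installed and the tensor lives on the CPU. torch.from_numpy and .numpy() share memory with the original array, while torch.Tensor(...) produces a separate float32 tensor. The example array is made up.

```python
import numpy as np
import torch

my_ndarray = np.array([[1.0, 2.0], [3.0, 4.0]])  # float64 example data

# from_numpy keeps the dtype and shares memory with the ndarray.
my_torch_tensor = torch.from_numpy(my_ndarray)
back_to_numpy = my_torch_tensor.numpy()

# Changing the original array is visible through the tensor and the round trip.
my_ndarray[0, 0] = 100.0
print(my_torch_tensor[0, 0].item())  # 100.0
print(back_to_numpy[0, 0])           # 100.0

# torch.Tensor(...) converts to the default float32 type instead.
converted = torch.Tensor(my_ndarray)
print(my_torch_tensor.dtype, converted.dtype)  # torch.float64 torch.float32
```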

Tensors in Pytorch and Tensorflow

According to the Manning PyTorch deep learning book, tensors in PyTorch can do more than NumPy ndarrays: they can handle parallelization and gradient calculation. They work very similarly to TensorFlow tensors.
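To give a feel for the gradient calculation part, here is a minimal sketch using PyTorch's autograd, assuming PyTorch is installed. The function being differentiated (a sum of squares) is chosen only because its gradient, 2x, is easy to check by hand.

```python
import torch

# requires_grad=True asks PyTorch to track operations on x.
x = torch.tensor([1.0, 2.0, 3.0], requires_grad=True)

# y = x1^2 + x2^2 + x3^2, so dy/dx = 2 * x.
y = (x ** 2).sum()

# backward() fills in x.grad with the gradient of y with respect to x.
y.backward()
print(x.grad)  # tensor([2., 4., 6.])
```

A plain NumPy ndarray has no equivalent of x.grad, which is the kind of extra capability the paragraph above is pointing at.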

Wikipedia says of tensors: "In mathematics, a tensor is a certain kind of geometrical entity and array concept. It generalizes the concepts of scalar, vector and linear operator, in a way that is independent of any chosen frame of reference. For example, doing rotations over axis does not affect at all the properties of tensors, if a transformation law is followed. Tensors are of importance in pure and applied mathematics, physics and engineering."

For example, in physics the tensor is a complex concept related to relativity (we have no idea what that means either). But in PyTorch and TensorFlow you just have to know it is a computer science data structure that makes machine learning data, specifically deep learning data, easier to handle and process, and that has the right APIs for us to do ML work.